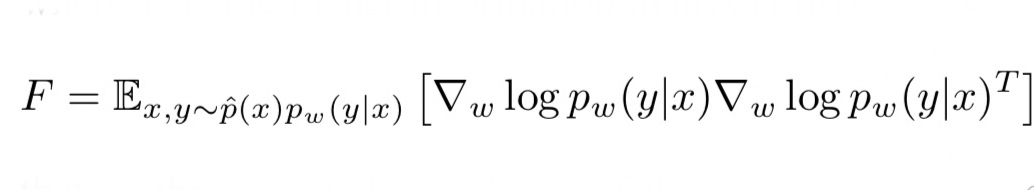

Task2Vec embedding using a probe network.

From the abstract of "Task2Vec: Task Embedding for Meta-Learning" (A. Achille, M. Lam, R. Tewari, A. Ravichandran, S. Maji, C. C. Fowlkes, et al.):

We introduce a method to provide vectorial representations of visual classification tasks which can be used to reason about the nature of those tasks and their relations. Given a dataset with ground-truth labels and a loss function defined over those labels, we process images through a "probe network" and compute an embedding based on estimates of the Fisher information matrix associated with the probe network parameters. This provides a fixed-dimensional embedding of the task that is independent of details such as the number of classes and does not require any understanding of the class label semantics. We demonstrate that this embedding is capable of predicting task similarities that match our intuition about semantic and taxonomic relations between different visual tasks (e.g., tasks based on classifying different types of plants are similar). We also demonstrate the practical value of this framework for the meta-task of selecting a pre-trained feature extractor for a new task. We present a simple meta-learning framework for learning a metric on embeddings that is capable of predicting which feature extractors will perform well. Selecting a feature extractor with task embedding obtains a performance close to the best available feature extractor, while costing substantially less than exhaustively training and evaluating on all available feature extractors.
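To make the Fisher-information idea concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: it assumes a toy linear softmax probe instead of a deep probe network, assumes the probe weights have already been fit, and sums the diagonal Fisher entries over classes so that the embedding length equals the feature dimension regardless of the number of classes. The function names `softmax` and `fisher_diagonal_embedding` are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fisher_diagonal_embedding(inputs, weights):
    """Per-feature diagonal Fisher information of a linear softmax probe.

    Assumptions (illustrative only, not from the paper):
      - inputs: list of feature vectors, each of length d
      - weights: list of K rows (one per class), each of length d
    For logits z = W x, the diagonal Fisher entry for W[k][j] is
    E_x[ p_k (1 - p_k) x_j^2 ], where p = softmax(z). Summing over k
    gives one value per input feature j.
    """
    d = len(weights[0])
    embedding = [0.0] * d
    for x in inputs:
        logits = [sum(w * v for w, v in zip(row, x)) for row in weights]
        probs = softmax(logits)
        # Sum over classes of p_k (1 - p_k): the class-dependent factor.
        class_term = sum(p * (1.0 - p) for p in probs)
        for j in range(d):
            embedding[j] += class_term * x[j] ** 2
    n = len(inputs)
    return [value / n for value in embedding]
```

Because the class dimension is summed out, two tasks with different numbers of classes still produce vectors of the same length, which mirrors the fixed-dimensionality property described above.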
There are a range of techniques to establish task similarity (see the links below). Many involve "task embeddings" that map a given dataset/task to a vector representation. For example, Task2Vec (Achille et al., 2019) generates an embedding for a given task using the Fisher Information Matrix associated with a pre-trained probe network. This probe network is shared across tasks and allows Task2Vec to estimate the Fisher Information Matrix of different image datasets. Another technique, from continual learning, is to keep a memory of models trained on previous tasks and try all of them on the new task to see which ones are worthy starting points for learning it.

This is a very fundamental problem in machine learning. The challenge in this assignment is to catalog the range of ideas proposed to address this key problem, identify which are most promising, and potentially implement some of them to perform an in-depth study of what really works in a variety of real-world settings.

Reference: Achille, Alessandro; Lam, Michael; Tewari, Rahul; Ravichandran, Avinash; Maji, Subhransu; Fowlkes, Charless; Soatto, Stefano; Perona, Pietro (2019). Task2Vec: Task Embedding for Meta-Learning. In: 2019 IEEE/CVF International Conference on Computer Vision (ICCV).
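As a rough illustration of how task embeddings can be used to establish task similarity, the sketch below picks a starting model for a new task by finding the most similar previously seen task. It assumes plain cosine distance rather than a learned metric, and the names `cosine_distance`, `select_extractor`, and the `known_tasks` layout are hypothetical choices for this example only.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


def select_extractor(new_embedding, known_tasks):
    """Pick the extractor that worked best on the most similar known task.

    known_tasks maps a task name to a pair
    (task embedding, name of the best extractor for that task).
    This layout is an assumption made for the sketch.
    """
    nearest = min(
        known_tasks,
        key=lambda name: cosine_distance(new_embedding, known_tasks[name][0]),
    )
    return nearest, known_tasks[nearest][1]


# Usage with made-up embeddings and extractor names.
known = {
    "plants": ([1.0, 0.0], "extractor_a"),
    "cars": ([0.0, 1.0], "extractor_b"),
}
task, extractor = select_extractor([0.9, 0.1], known)
```

This replaces the "try every stored model" strategy with a single nearest-neighbor lookup, at the cost of trusting that embedding distance tracks transferability.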
Project: Task similarity and transferability in machine learning

Description

Humans are very efficient learners because we can leverage prior experience when learning new tasks. For instance, a child first learns how to walk, and then efficiently learns how to run (obviously without starting from scratch). Several areas of machine learning aim to mimic this ability. Transfer learning and continual learning aim to select and transfer previously learned representations/embeddings so that new tasks can be learned quickly. Meta-learning aims to do this even more efficiently by training over distributions of very similar prior tasks.

A key question here is: "When is a previous task similar enough that I can effectively transfer information from it?" If the task is similar, the transferred priors can speed up learning dramatically, but if it is different, such priors can make learning the new task much less efficient.
